Overview

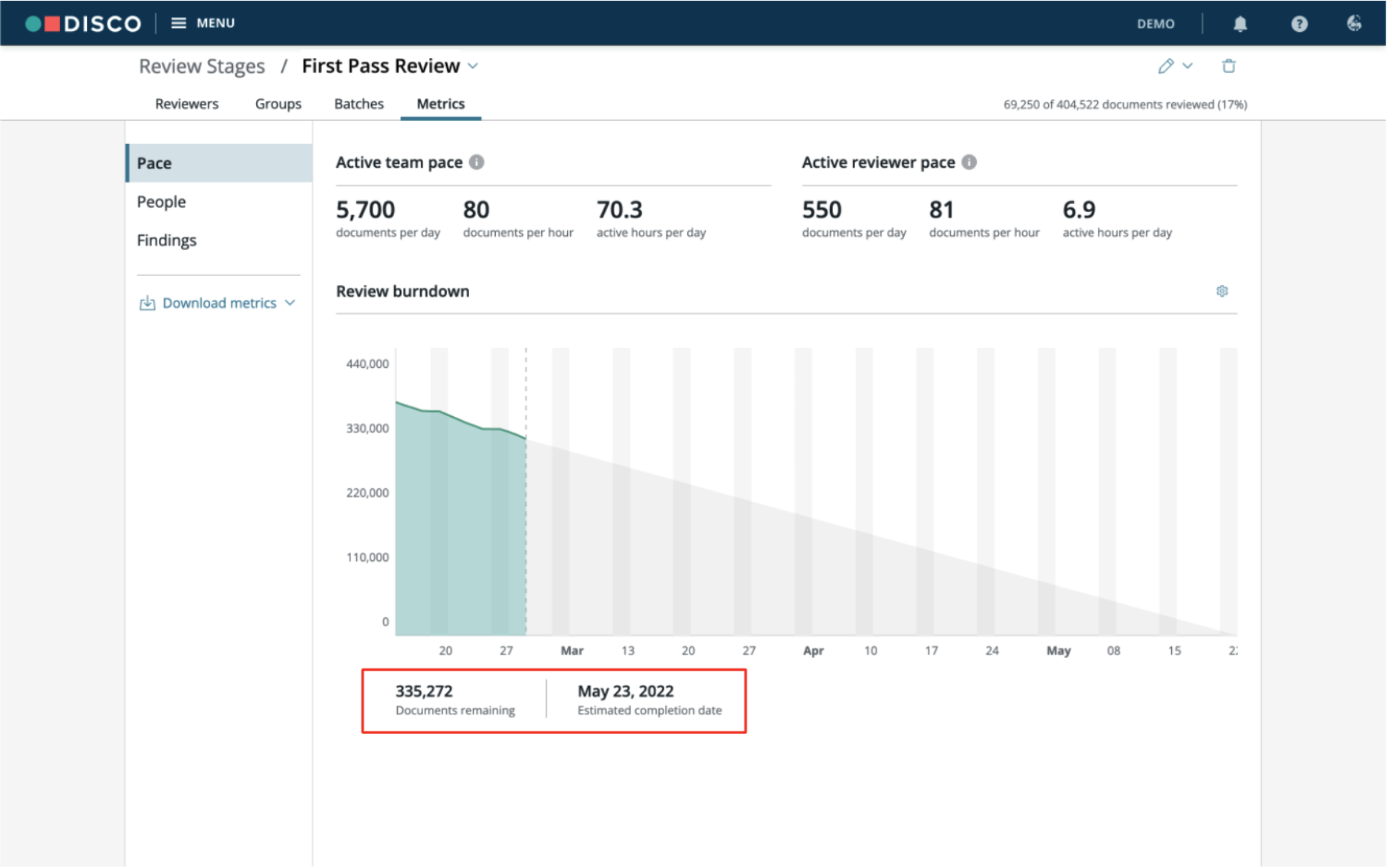

Review stages let you create, organize, prioritize, and manage your document review. Review stage metrics support that work by showing the pace of review, tag rates, and overall findings. This information helps with staffing and budgeting, and with identifying and correcting anomalies early in the review process.

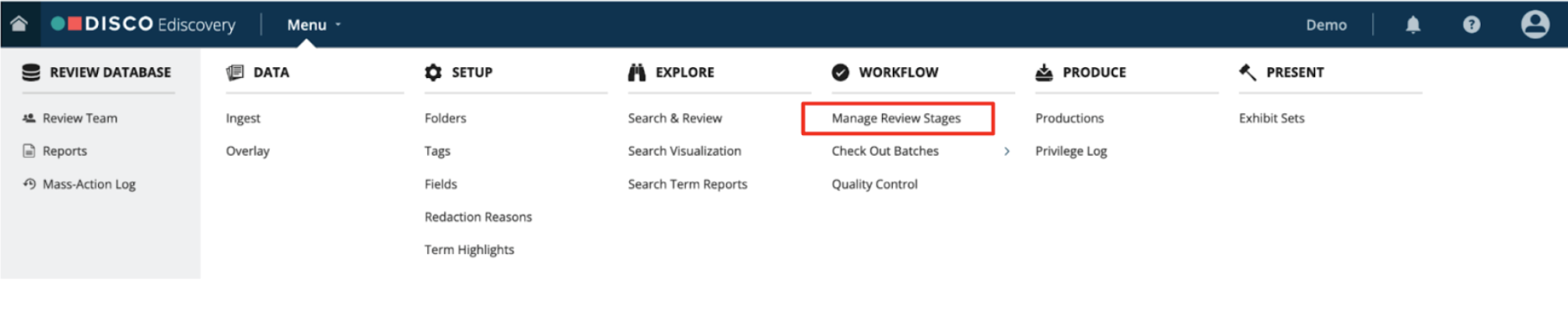

To view metrics, in the DISCO main menu, click Manage Review Stages.

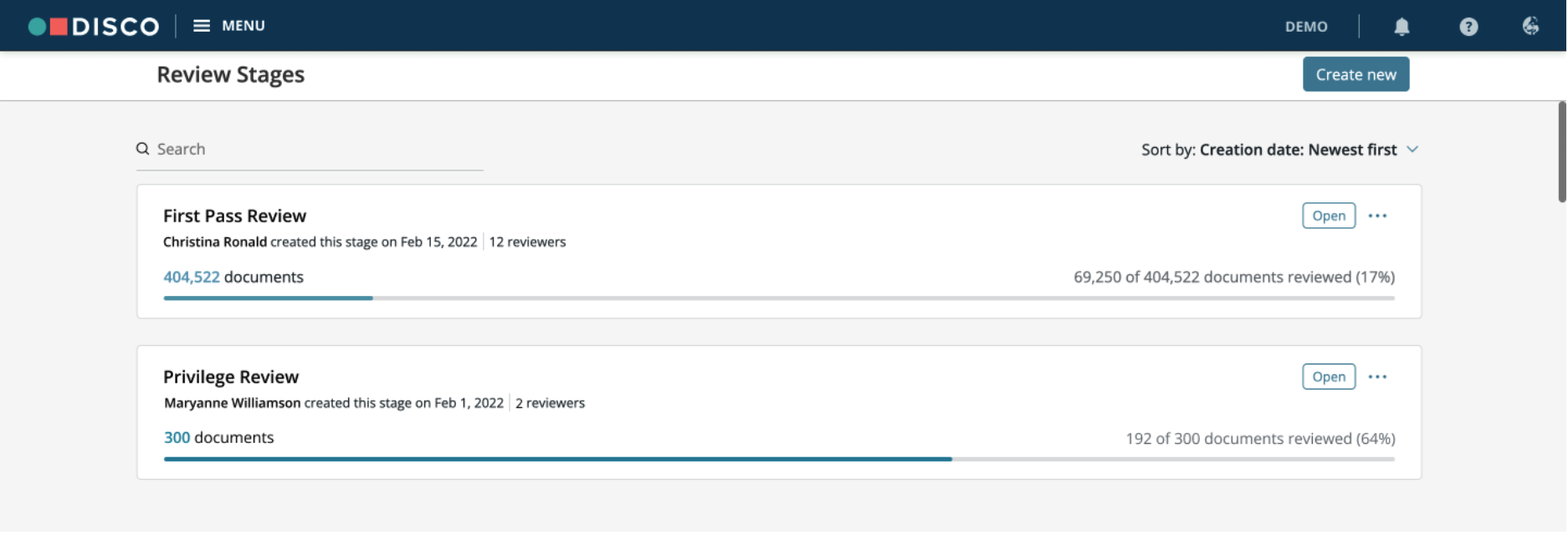

Review metrics are unique to each review stage. To see the metrics for a particular review stage, click Open on a review stage card, and then click the Metrics tab.

The metrics are broken down into three areas:

- Pace – Displays the team’s overall review pace along with the median pace of active reviewers. DISCO will show the number of remaining documents and estimate how long the review will take based on the current review pace.

- People – DISCO provides charts that show reviewer pace (active hours and documents per hour) and tagging rate by reviewer. These charts provide insight into the overall review, and highlight outliers among your review team.

- Findings – The percentage distribution of tags applied within the stage. Double-click a tag to review the associated documents.

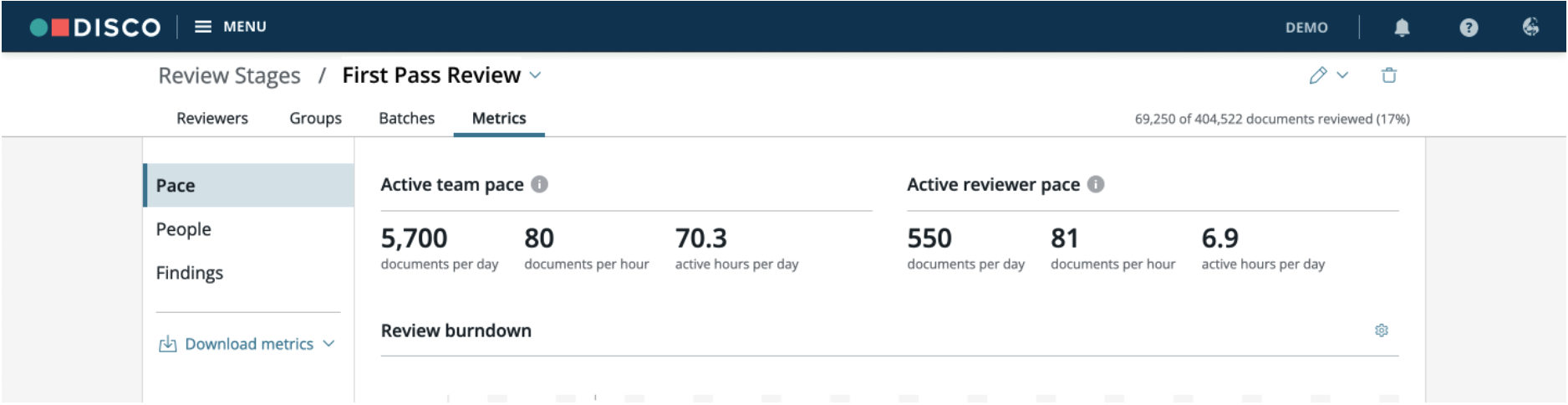

Pace

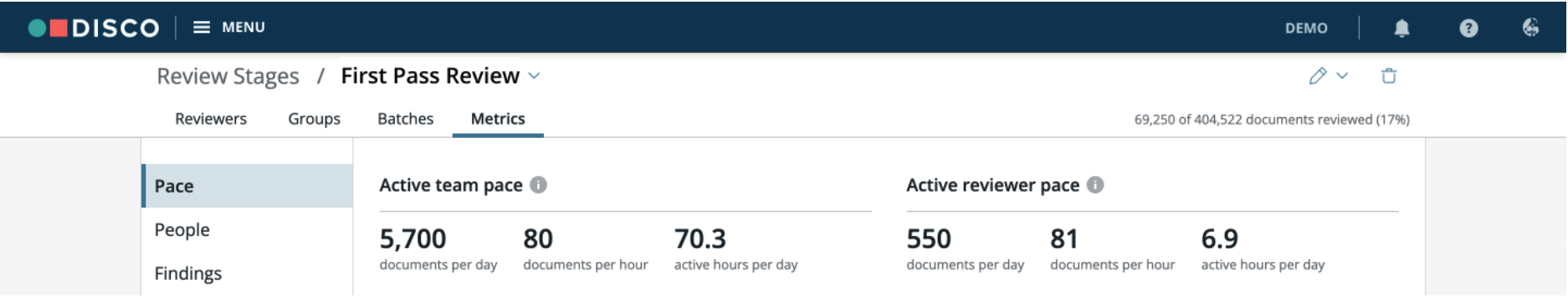

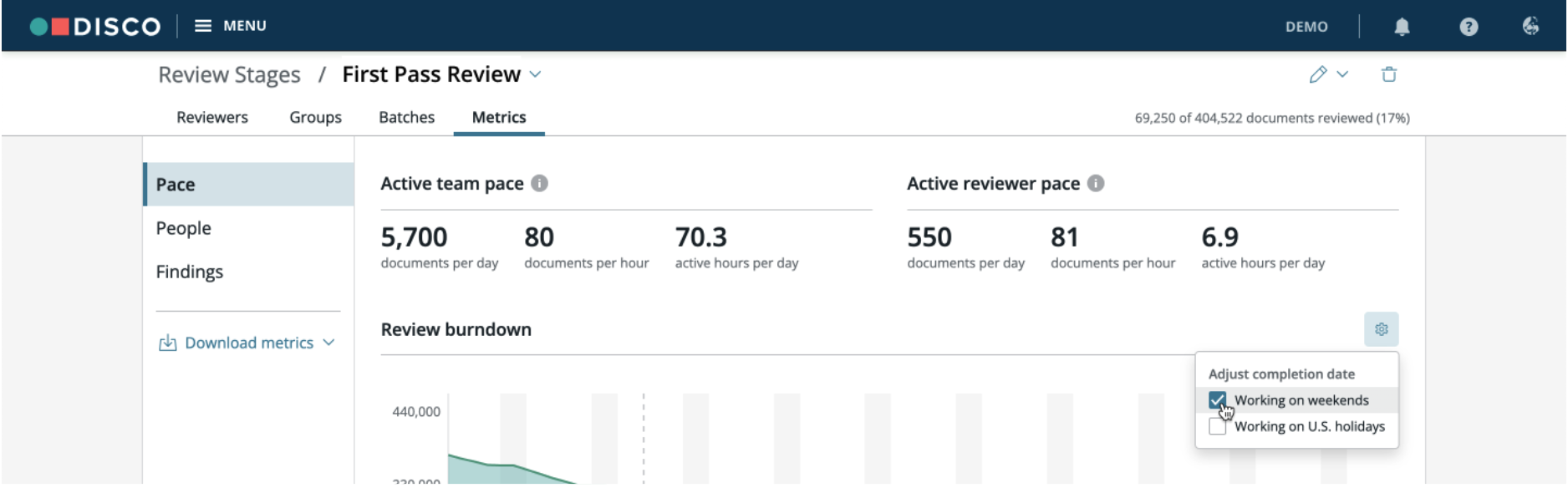

The Pace tab shows the speed of review and projects a completion date based on your review team’s recent performance. With this estimate, you can assess whether the current number of hours and reviewers is adequate to meet your deadlines.

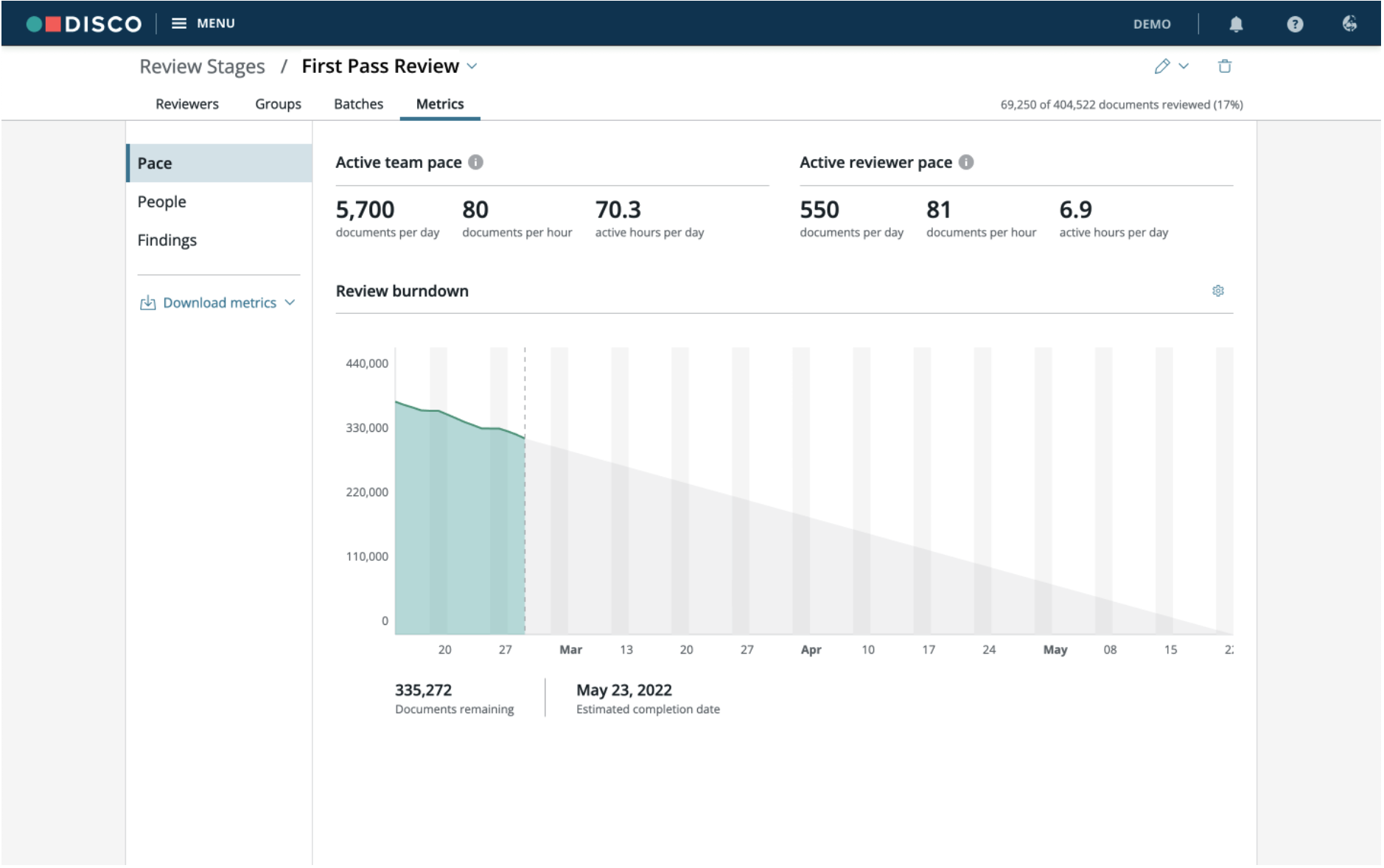

Documents remaining - This count is the remaining number of documents in the review stage that have not yet been marked as reviewed.

Estimated completion date – This is calculated from the recent Active Team Pace and the number of unreviewed documents remaining in the review stage. By default, the system assumes that the team will not work on weekends or holidays. You can select or deselect the Working Weekends and Working Holidays checkboxes, and DISCO Review will adjust the completion date. Note: The holidays are preset to the standard United States federal holiday calendar.
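As a rough illustration of how a projection like this can work, the sketch below walks forward one calendar day at a time and counts only working days. It is not DISCO's implementation: the function name, the constant daily pace, the rule that the start date counts as a working day, and the holiday set passed in are all assumptions made for this example.

```python
import math
from datetime import date, timedelta


def estimate_completion(docs_remaining, docs_per_day, start,
                        working_weekends=False, working_holidays=False,
                        holidays=frozenset()):
    """Project the date on which the last remaining document is reviewed.

    Hypothetical helper: assumes a constant daily pace and that `start`
    counts as a working day when it is eligible.
    """
    if docs_per_day <= 0:
        raise ValueError("docs_per_day must be positive")
    days_needed = math.ceil(docs_remaining / docs_per_day)
    if days_needed == 0:
        return start
    current, worked = start, 0
    while True:
        is_weekend = current.weekday() >= 5  # Saturday is 5, Sunday is 6
        is_holiday = current in holidays
        if (working_weekends or not is_weekend) and (working_holidays or not is_holiday):
            worked += 1
            if worked == days_needed:
                return current
        current += timedelta(days=1)


monday = date(2024, 3, 11)
print(estimate_completion(3500, 500, monday))
print(estimate_completion(3500, 500, monday, working_weekends=True))
```

Passing `working_weekends=True` mirrors selecting the Working Weekends checkbox: the same number of working days is needed, but they are reached sooner on the calendar.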

Active Team Pace – The average pace over the past 7 days, excluding days on which the pace is less than 20% of the 7-day average. The pace is broken down by documents reviewed per day, documents reviewed per hour, and active hours per day. Limiting the data to the last 7 days lets the calculation track normal fluctuations in pace, such as changes in the size of the review team, reviewers becoming familiar with the protocol, or document sources varying in complexity, so the metrics reflect the most current outlook.
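The 20% exclusion rule can be sketched in a few lines. This is only an interpretation of the description above, not DISCO's code: the function name is hypothetical, and it assumes the input is a list of daily document counts with the most recent day last.

```python
from statistics import mean


def active_team_pace(daily_docs, threshold=0.20):
    """Average documents per day over the last 7 days, ignoring slow days.

    A day is dropped when its count is below `threshold` times the plain
    7-day average (assumed reading of the exclusion rule).
    """
    window = daily_docs[-7:]
    if not window:
        return 0.0
    baseline = mean(window)
    kept = [count for count in window if count >= threshold * baseline]
    return mean(kept) if kept else 0.0


# Five normal weekdays followed by a light Saturday and an idle Sunday.
print(active_team_pace([500, 520, 480, 510, 490, 20, 0]))
```

In this example the plain 7-day average is 360 documents per day, so any day under 72 documents is excluded, and the remaining five days average 500.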

Active Reviewer Pace – The median pace of all reviewers who have reviewed at least one document in the past 7 days, excluding days that are less than 20% of the 7-day average. This exclusion allows for a more accurate assessment. For example, someone reviewing a handful of documents over the weekend or working only half a day will not be included. Either case would pull the overall pace down, giving the illusion of lower productivity.
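A minimal sketch of the median step follows. It assumes each reviewer's 7-day totals are already available as a (documents reviewed, active hours) pair and that slow days have already been filtered out; the function name and input shape are hypothetical.

```python
from statistics import median


def active_reviewer_pace(reviewer_totals):
    """Median documents per hour across reviewers with at least one document.

    `reviewer_totals` maps a reviewer name to (docs_reviewed, active_hours).
    """
    paces = [
        docs / hours
        for docs, hours in reviewer_totals.values()
        if docs >= 1 and hours > 0
    ]
    return median(paces) if paces else 0.0


totals = {
    "Reviewer A": (400, 8.0),
    "Reviewer B": (300, 10.0),
    "Reviewer C": (0, 0.0),    # inactive, so left out of the median
    "Reviewer D": (630, 9.0),
}
print(active_reviewer_pace(totals))
```

Using the median rather than the mean keeps one unusually fast or slow reviewer from dominating the figure.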

Active Team and Reviewer Pace data covers the previous 7 days (as of the previous day) and is updated every 24 hours.

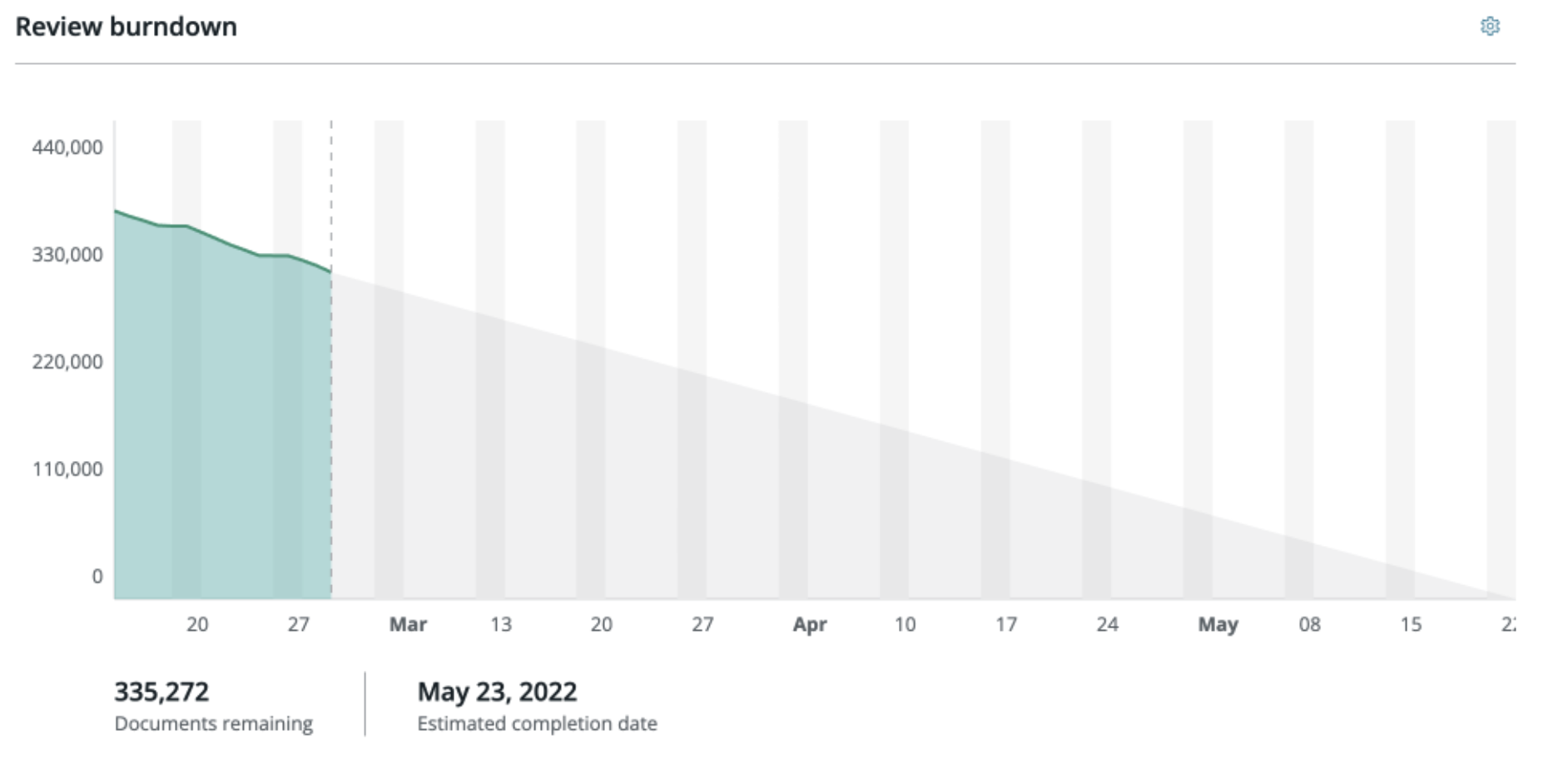

Burndown Chart – Provides a visual representation of the number of documents remaining to be reviewed each day until the estimated date of completion. You can click any day within the Burndown chart for further details, including the number of documents reviewed, documents remaining, documents added/removed, and active reviewers. If documents are added to or removed from the review stage, the corresponding changes are reflected here. The remaining document count and the estimated review completion date appear below the chart. The Burndown chart is updated with new data every 60-90 minutes.

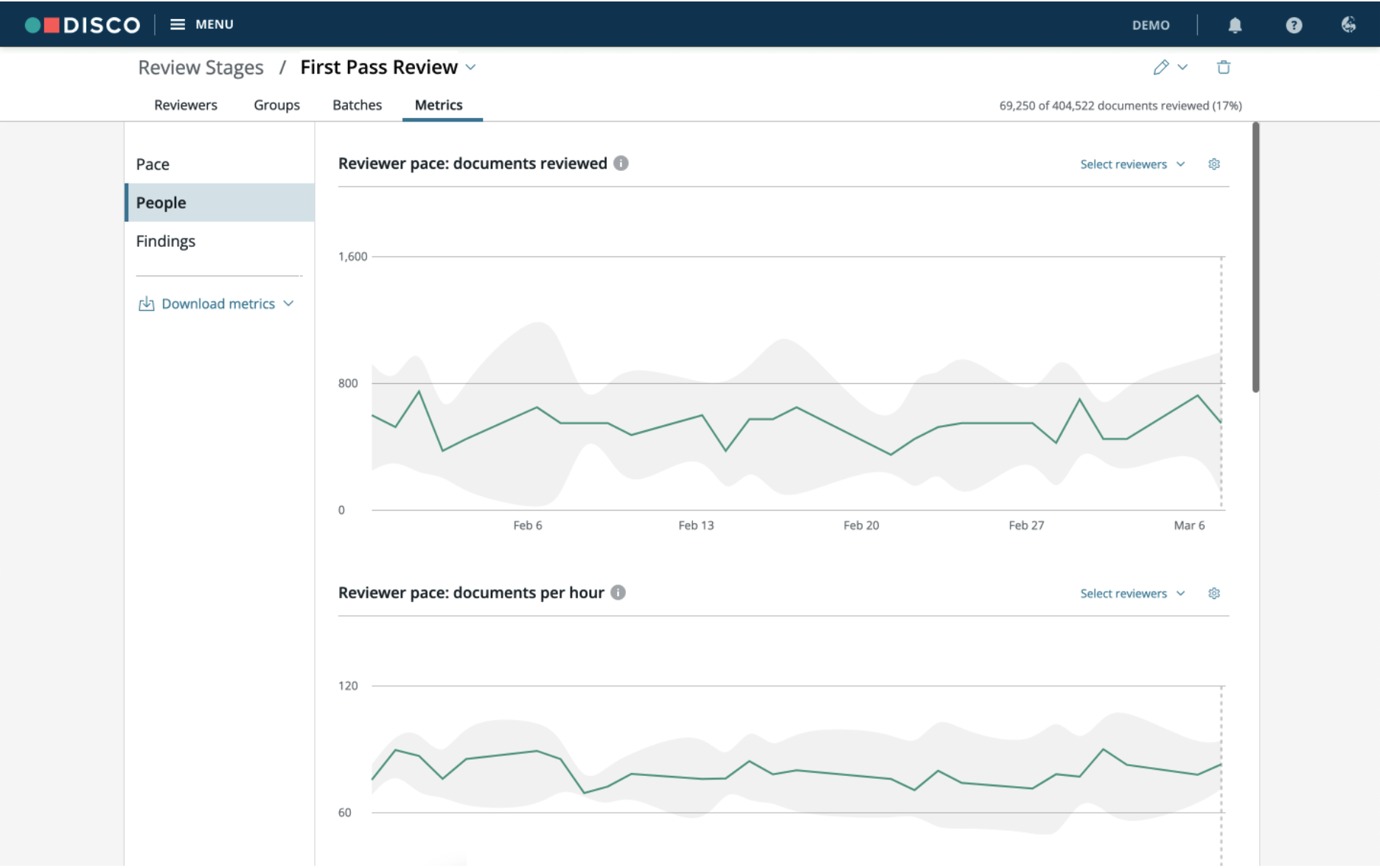

People

The People tab lets you drill into metrics for specific reviewers, including number of documents reviewed, time spent reviewing, documents per hour, and tagging behavior. People metrics data is updated every 10 minutes.

Reviewer pace: documents reviewed - This chart plots out how many documents each reviewer has reviewed each day. A document is considered reviewed once the user clicks “Mark Reviewed & Next” when reviewing a document in a batch.

Reviewer pace: documents per hour - This chart plots how many documents each reviewer has reviewed per hour. It is calculated by dividing the number of documents marked as reviewed by the number of hours spent reviewing.

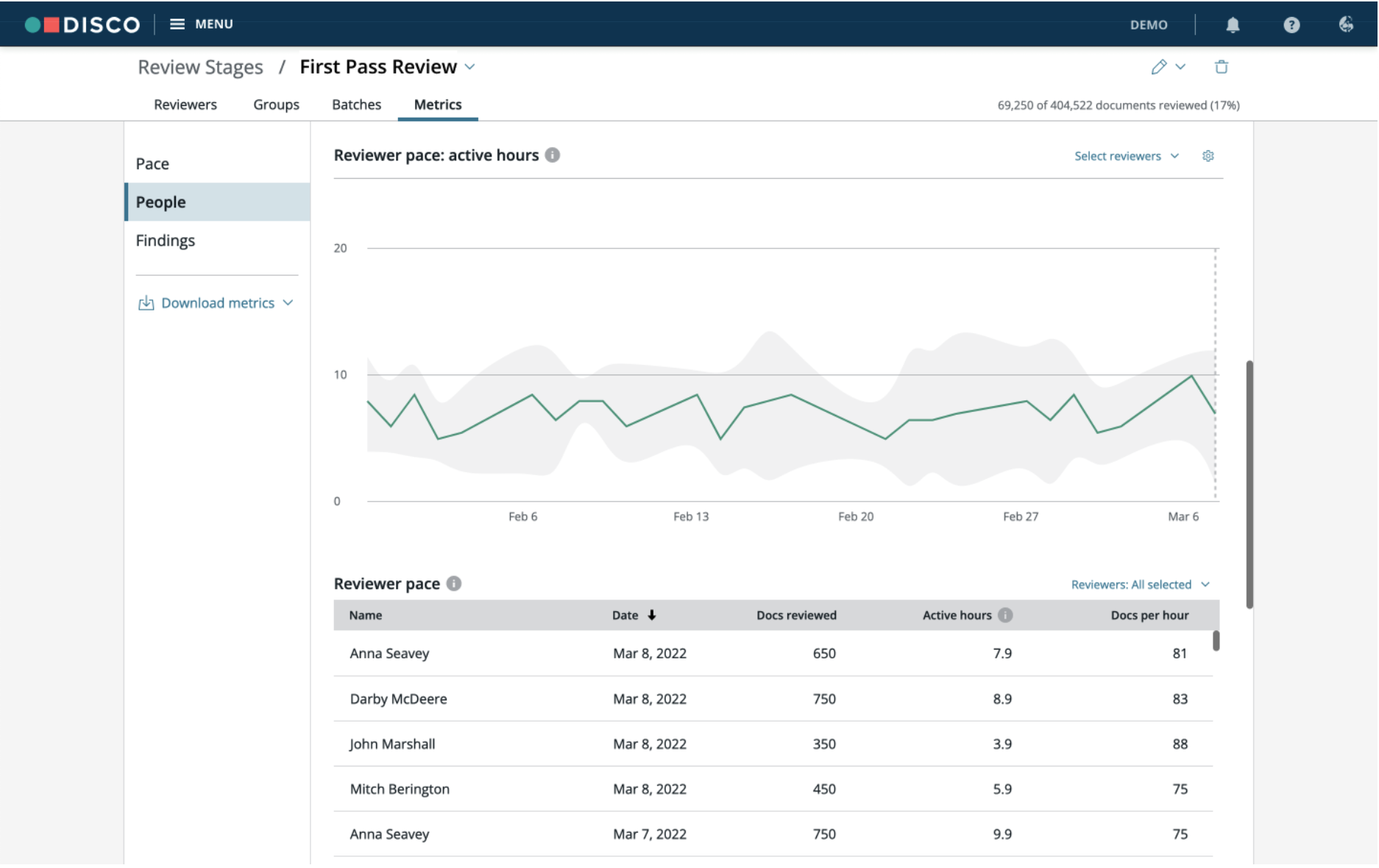

Reviewer pace: active hours - This chart plots out how much time reviewers spend actively reviewing in Review Stages. Active time within batches is tracked by different events. Events include mouse clicks, using the keyboard, applying tags, and applying redactions in the Document Reviewer. Activity tracking is paused when the reviewer is inactive for more than 6 consecutive minutes.
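To make the idle cutoff concrete, the sketch below adds up the gaps between consecutive activity events and skips any gap longer than 6 minutes. How DISCO accounts for the minutes leading up to a pause is not documented here, so treating a long gap as contributing no active time is an assumption, as are the function names. The second helper applies the documents-per-hour division described earlier.

```python
from datetime import datetime, timedelta

IDLE_LIMIT = timedelta(minutes=6)


def active_time(event_times, idle_limit=IDLE_LIMIT):
    """Sum the time between consecutive events, ignoring idle gaps."""
    ordered = sorted(event_times)
    total = timedelta()
    for previous, current in zip(ordered, ordered[1:]):
        gap = current - previous
        if gap <= idle_limit:  # longer gaps are treated as a pause
            total += gap
    return total


def docs_per_hour(docs_reviewed, active):
    """Documents marked as reviewed divided by active hours."""
    seconds = active.total_seconds()
    return docs_reviewed * 3600 / seconds if seconds else 0.0


events = [
    datetime(2024, 3, 15, 9, 0),
    datetime(2024, 3, 15, 9, 2),
    datetime(2024, 3, 15, 9, 5),
    datetime(2024, 3, 15, 9, 20),  # 15-minute gap: counted as a pause
    datetime(2024, 3, 15, 9, 23),
]
worked = active_time(events)
print(worked, docs_per_hour(12, worked))
```

Here the 15-minute gap is dropped, leaving 8 active minutes; 12 documents in that time works out to 90 documents per hour.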

In addition to displaying the individual reviewer’s pace, you can click on any point on the chart to see the pace of the individual reviewer as well as the median review pace for a particular day.

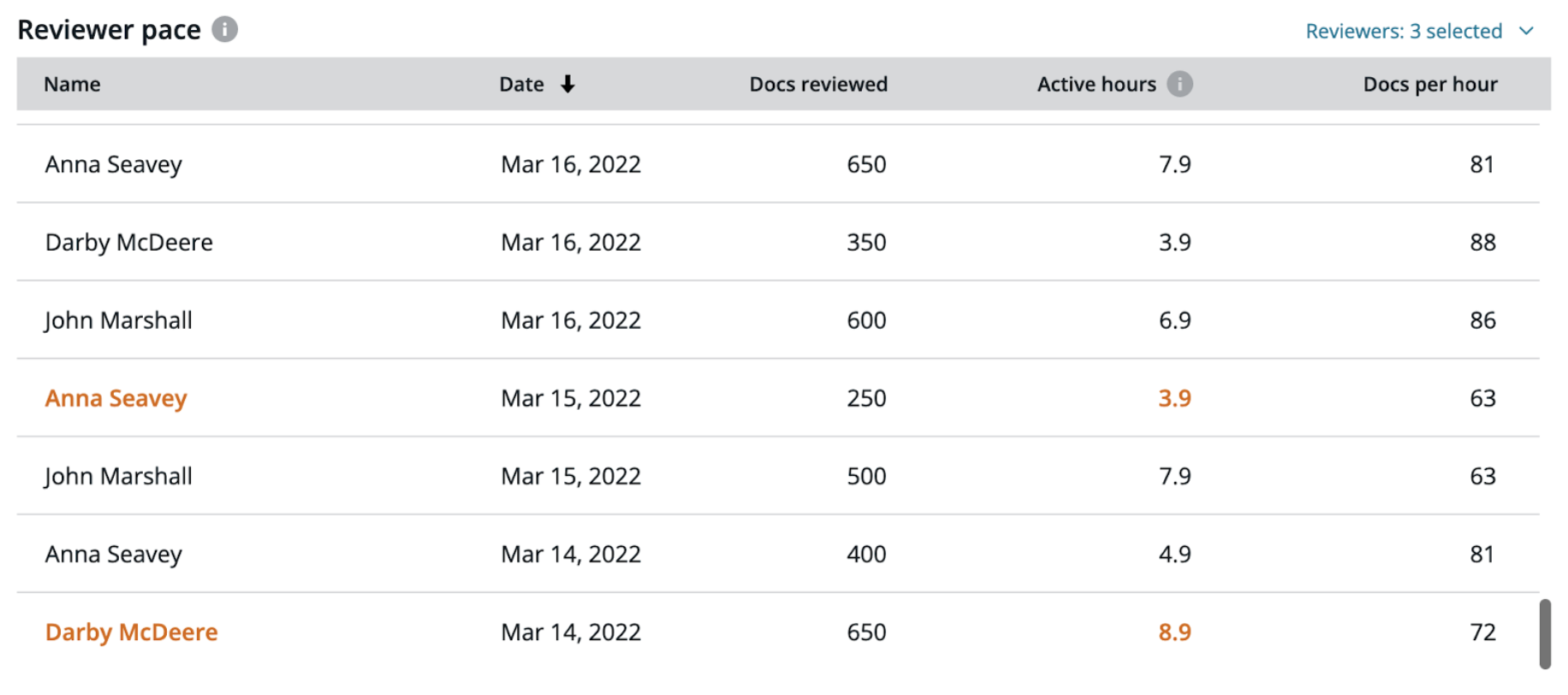

Reviewer pace table - The Reviewer pace table combines Documents reviewed, Active hours, and Documents per hour into a table format. You can sort data by Name, Date, Docs reviewed, Active hours, or Docs per hour by clicking on the column header.

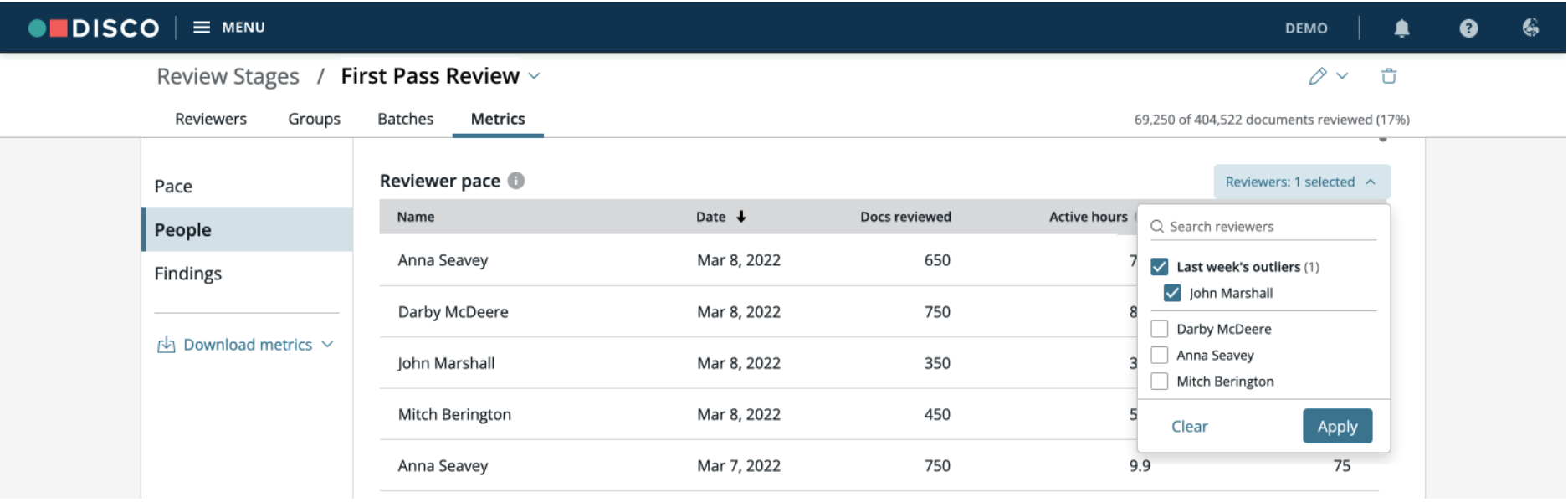

Click Select reviewers to select data for specific reviewers or recent outliers. Outlier data is shown in orange along with the reviewer name. For example, in the image below Anna Seavey is an outlier for Active hours on March 15th. Her name and “3.9” hrs under the Active hours column are shown in orange.

Outliers - Outliers are defined as any reviewer whose review pace fell outside the normal range over the past seven days, implying that their review pace is significantly higher or lower than their peers on a given day.
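The exact "normal range" DISCO uses is not specified here. Purely as an illustration of the idea, the sketch below flags reviewers with a common statistical rule (Tukey's fences, based on the interquartile range). The rule, the multiplier `k`, and the function name are assumptions for this example and should not be read as DISCO's method.

```python
from statistics import quantiles


def flag_outliers(pace_by_reviewer, k=1.5):
    """Return reviewers whose pace falls outside Q1 - k*IQR .. Q3 + k*IQR."""
    values = list(pace_by_reviewer.values())
    if len(values) < 2:
        return []
    q1, _, q3 = quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return sorted(
        name for name, pace in pace_by_reviewer.items()
        if pace < low or pace > high
    )


# Documents per hour for one day; reviewer G is far above the group.
paces = {"A": 48, "B": 49, "C": 50, "D": 50, "E": 51, "F": 52, "G": 95}
print(flag_outliers(paces))
```

A rule of this kind flags both unusually fast and unusually slow reviewers, which matches the description of outliers as significantly higher or lower than their peers.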

After selecting which reviewers and/or outliers you wish to focus on, DISCO will layer each chosen reviewer’s individual review pace onto the Reviewer Pace charts or will filter the list of reviewers in the table view.

Weekends & U.S. Holidays - You can include or exclude weekends and holidays, which controls whether DISCO treats reviewers as working on those days. The holidays are preset to the standard United States federal holiday calendar.

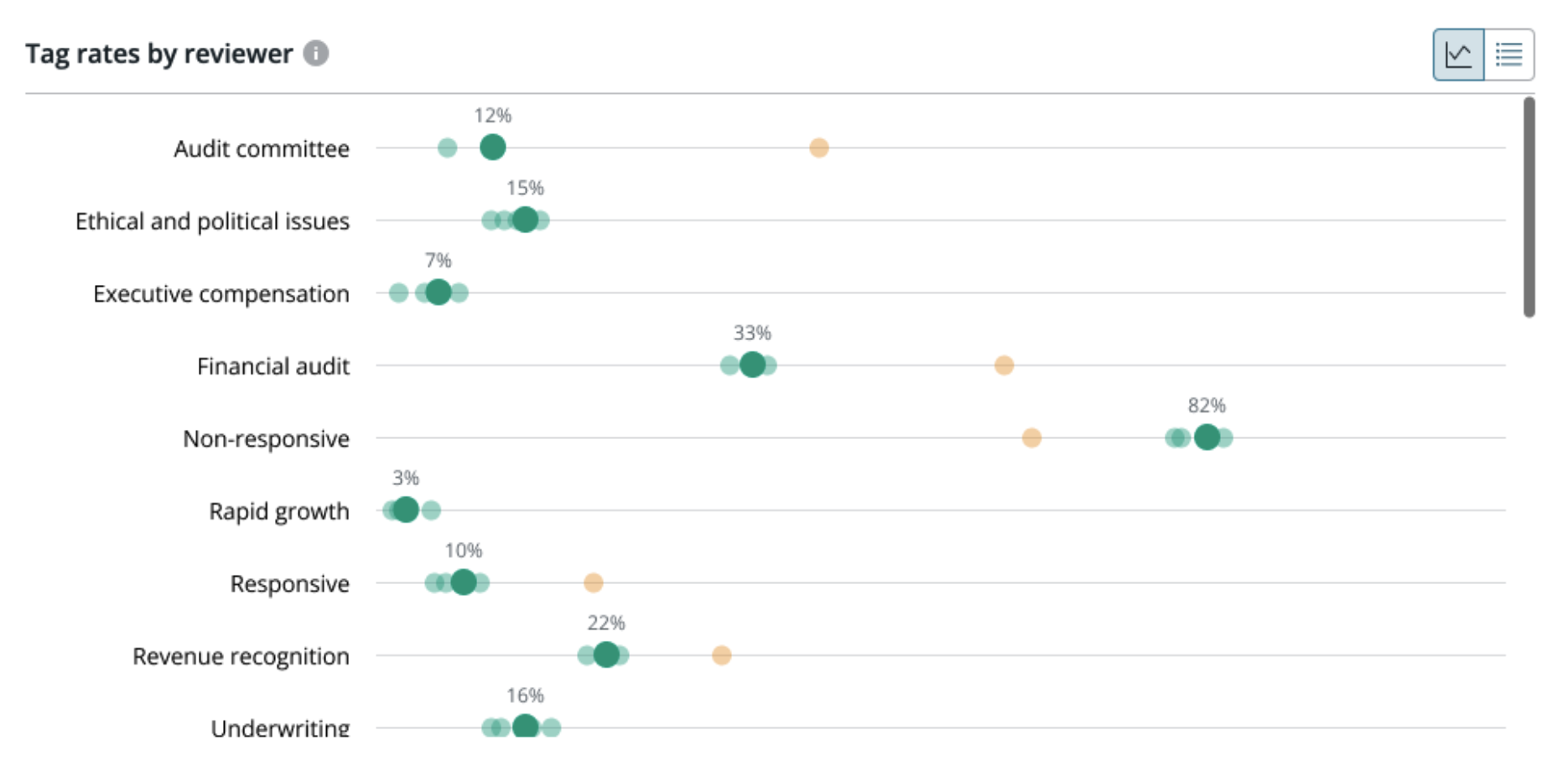

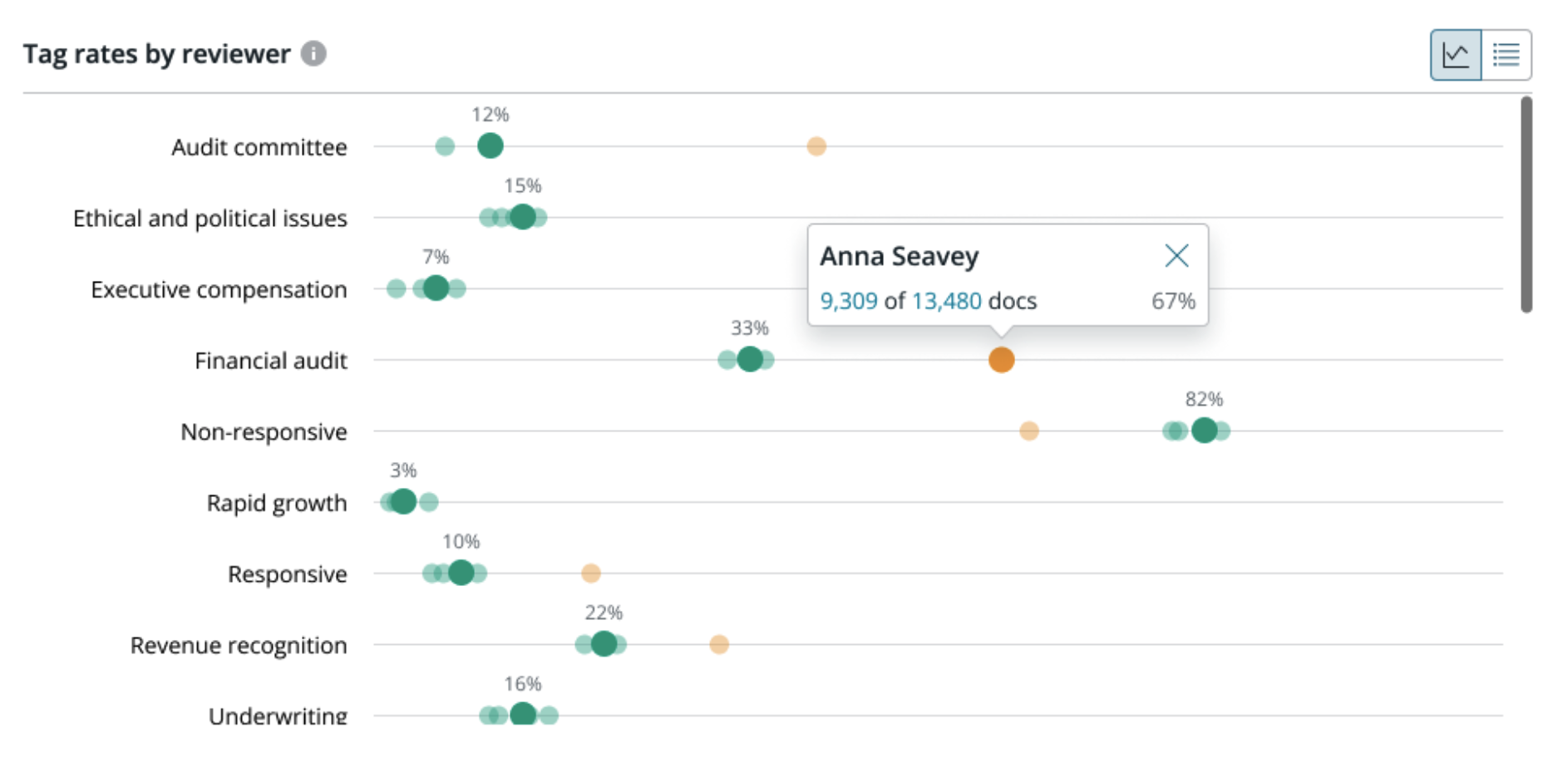

Tag Rates by Reviewer

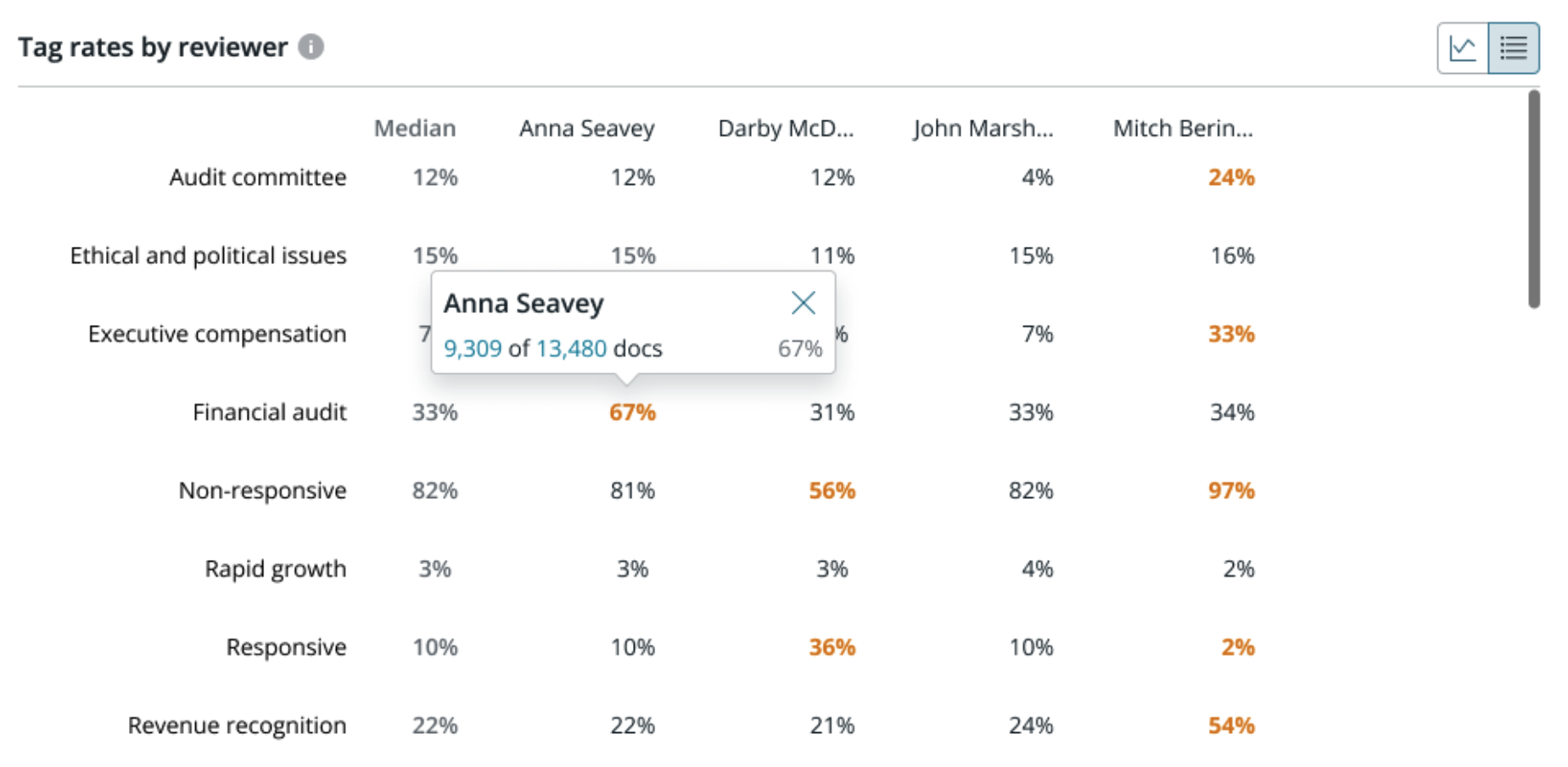

This area shows the median rate at which each tag was applied, along with the rate for each reviewer.

For example, in the image below you can see the median application of the “Financial audit” tag is 33%. By clicking in the chart you can see that one reviewer has applied the “Financial audit” tag at a rate of 67%. DISCO Review makes it easy to identify such outliers by highlighting their data in orange. Such insight into tagging variations allows you to identify differences in understanding among reviewers.

One example of this would be the use of a Hot tag. Some reviewers tend to broadly apply such a tag, while others are more strict in its use. Being able to identify variations early on in the review will allow those reviewing or supervising the review to ensure that all reviewers are on the same page, lessening the differences in tag application by individual reviewer. This will cut down the time needed later for QC as well as within other review workflows such as deposition preparation.
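The comparison between a reviewer's tag rate and the team median can be sketched as follows. The input shape (per-reviewer counts of documents reviewed and documents tagged) and the function name are assumptions for illustration; the numbers are chosen to reproduce the 33% median and 67% reviewer rate from the example above.

```python
from statistics import median


def tag_rates(reviewed, tagged):
    """Return ({reviewer: percent of reviewed docs tagged}, median percent).

    `reviewed` maps reviewer -> documents reviewed;
    `tagged` maps reviewer -> documents given the tag in question.
    """
    rates = {
        name: 100 * tagged.get(name, 0) / total
        for name, total in reviewed.items()
        if total
    }
    return rates, (median(rates.values()) if rates else 0.0)


reviewed = {"Reviewer A": 300, "Reviewer B": 300, "Reviewer C": 300}
financial_audit = {"Reviewer A": 90, "Reviewer B": 99, "Reviewer C": 201}
rates, team_median = tag_rates(reviewed, financial_audit)
print(rates, team_median)
```

Reviewer C's 67% rate against a 33% median is the kind of gap that suggests a difference in how the tag is being interpreted.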

If you prefer to view the information in columns, click the column viewer option.

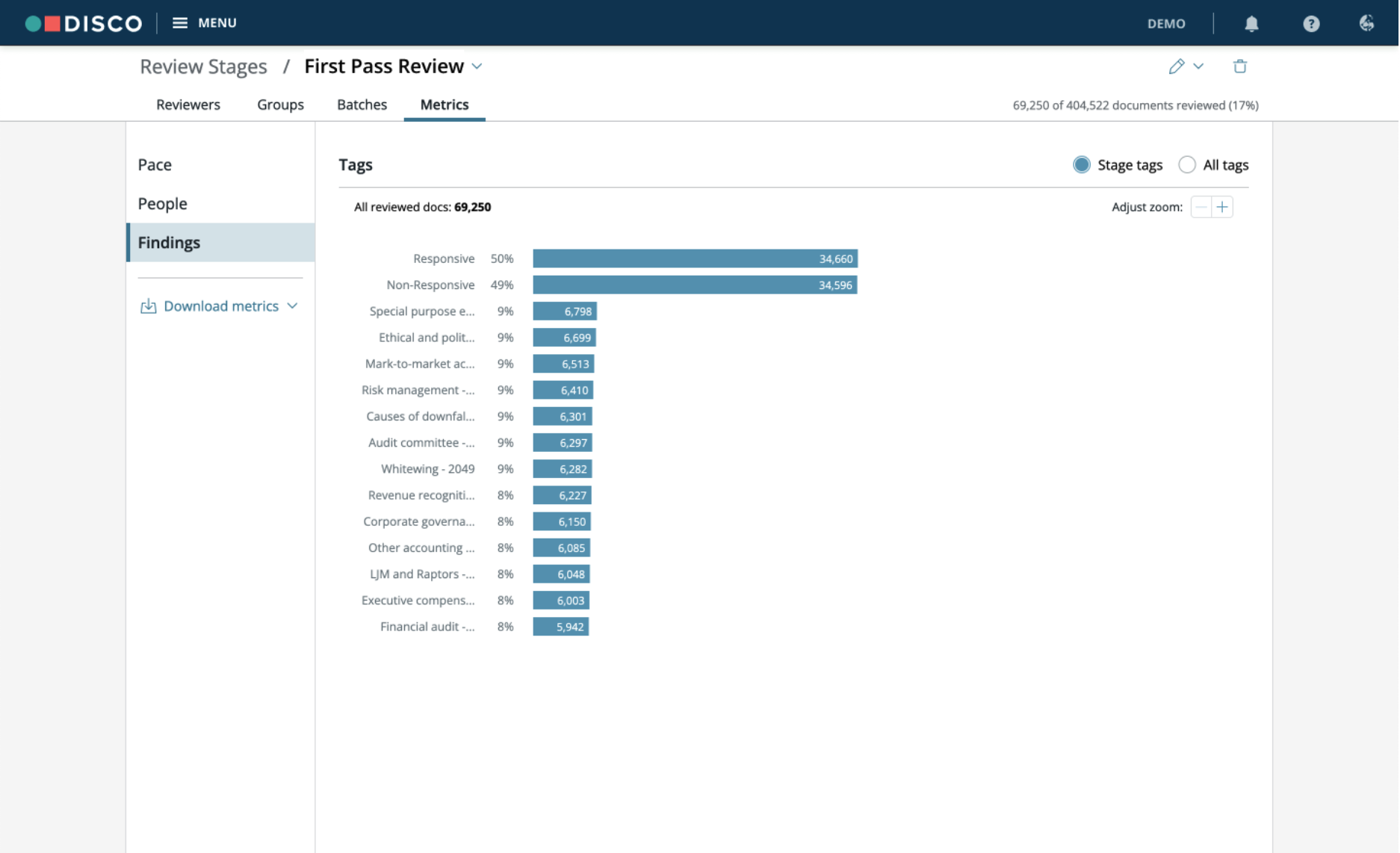

Findings

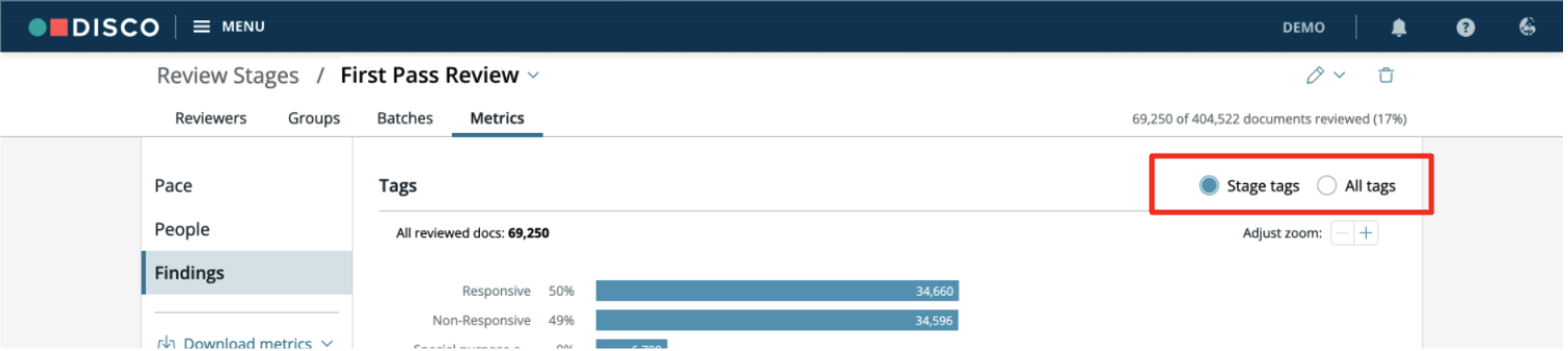

The Findings tab provides insight into the overall tag distribution for your review stage. Here you can see if your tagging aligns with any statistical sampling or other expectations. Findings tag distribution data is updated every 10 minutes.
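A tag distribution of this kind can be computed by counting how many reviewed documents carry each tag. The sketch below assumes the percentage is taken over reviewed documents (so percentages can sum to more than 100 when a document has several tags); that denominator, the function name, and the sample tags are assumptions for illustration.

```python
from collections import Counter


def tag_distribution(tags_per_document):
    """Percent of reviewed documents carrying each tag."""
    total = len(tags_per_document)
    if total == 0:
        return {}
    # set() ensures a tag is counted once per document.
    counts = Counter(tag for tags in tags_per_document for tag in set(tags))
    return {tag: 100 * count / total for tag, count in counts.items()}


documents = [
    ["Responsive"],
    ["Responsive", "Hot"],
    ["Not responsive"],
    ["Responsive"],
]
print(tag_distribution(documents))
```

Comparing these percentages with the rate seen in a statistical sample is one way to check whether tagging is in line with expectations.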

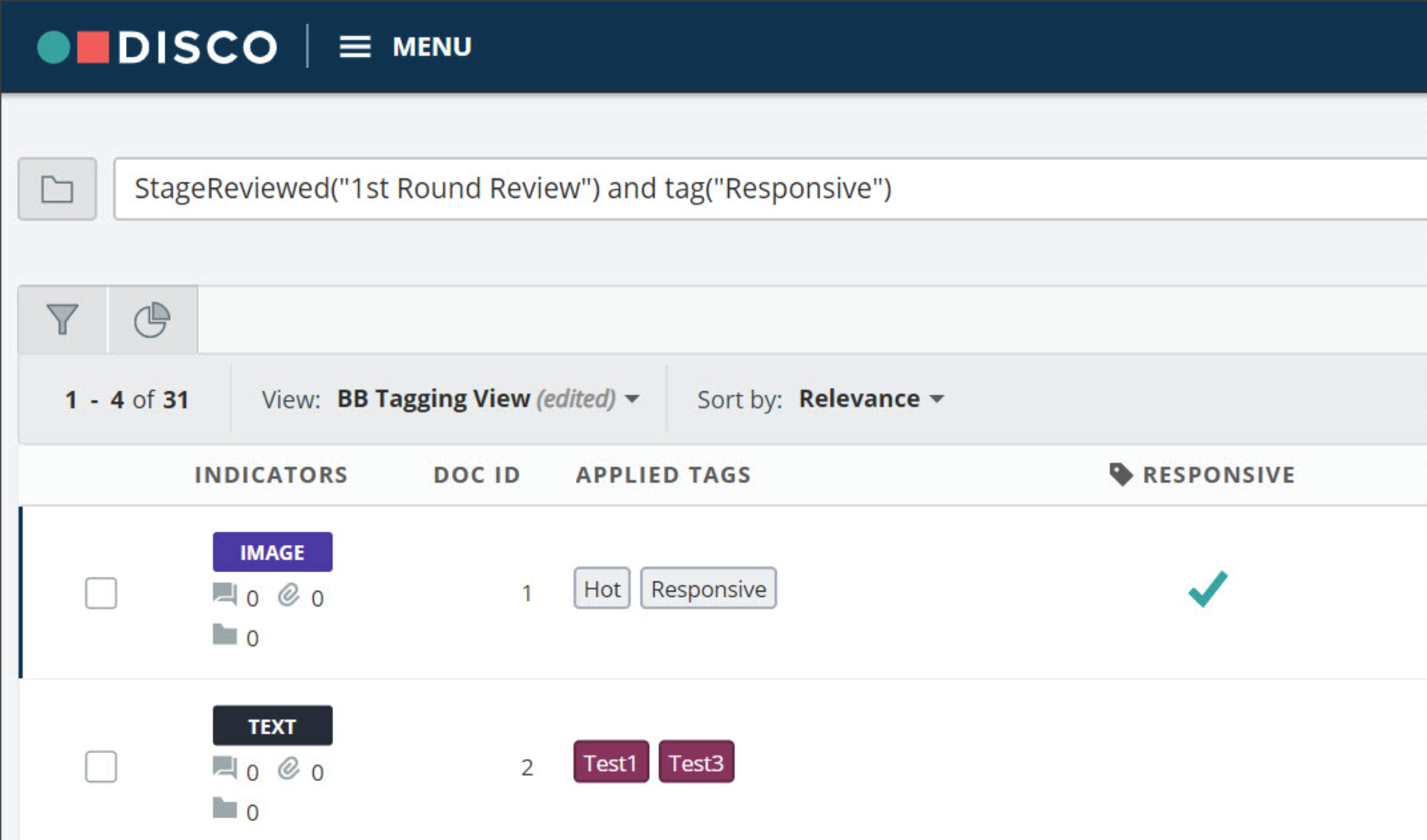

Click a tag name to view the reviewed documents with that particular tag. DISCO will pull up the selected documents in a search for your review. You can see tag application information and basic metadata information by scrolling through your document list. You can select any view to use for scrolling, utilizing custom columns. Further, you can open the documents to view them in the standard document viewer.

You can also toggle between only those tags exposed to reviewers (Stage tags) or all tags within the database on the Findings tab.

Export

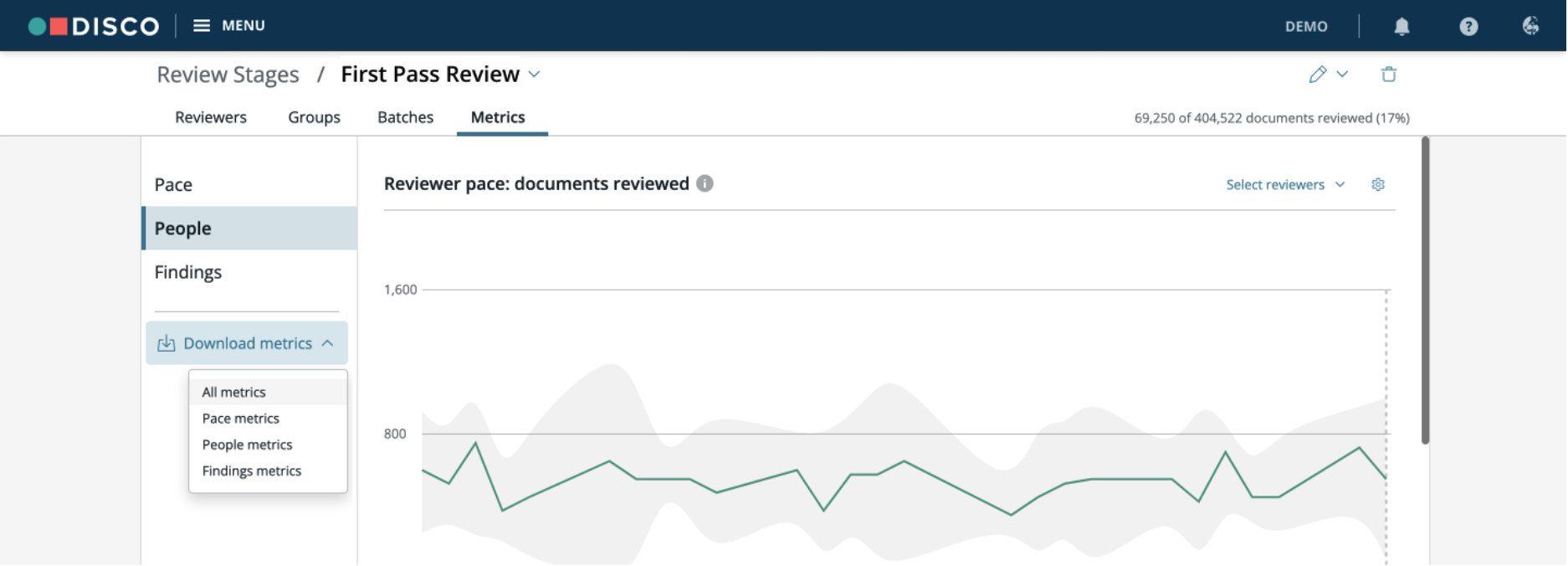

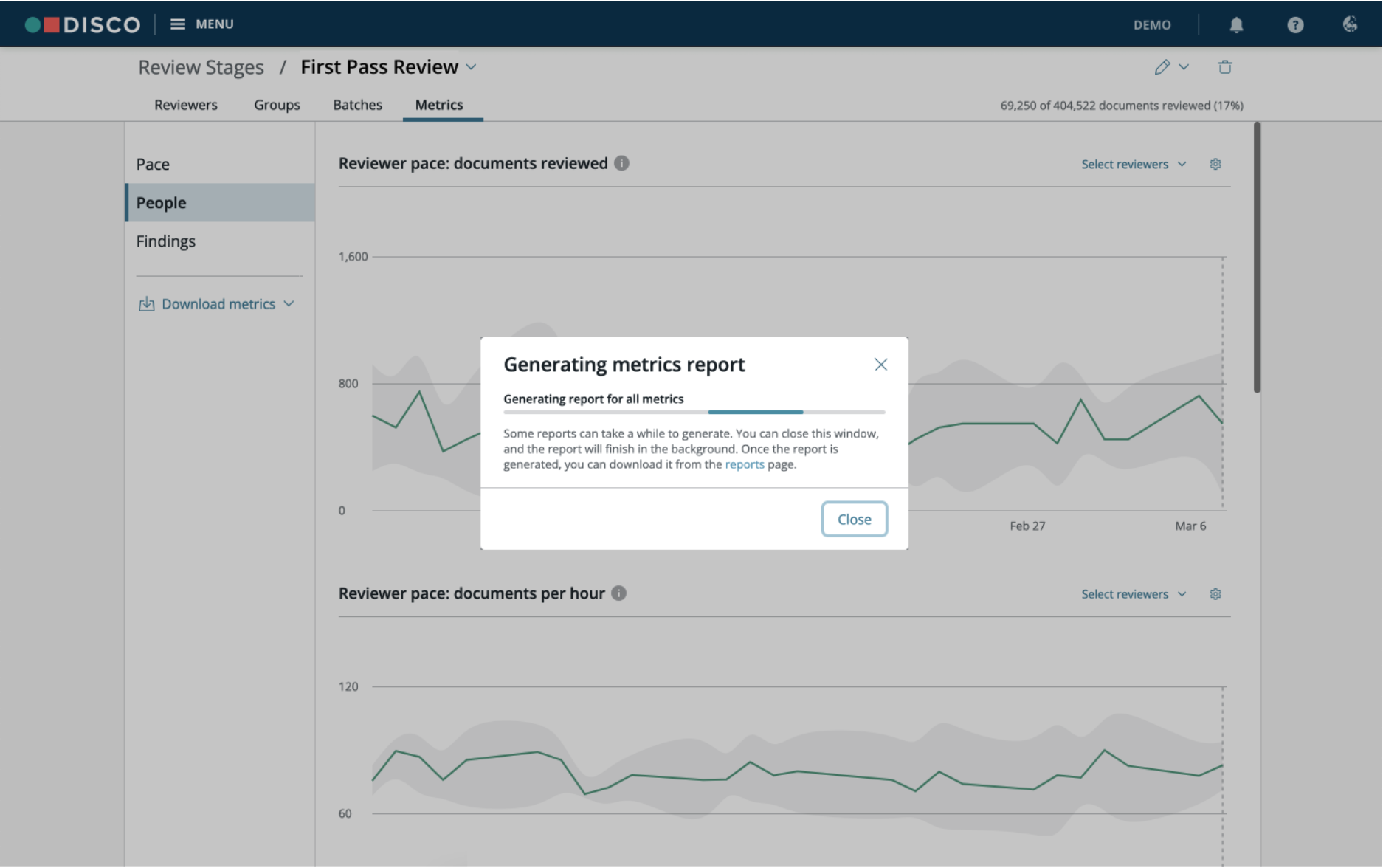

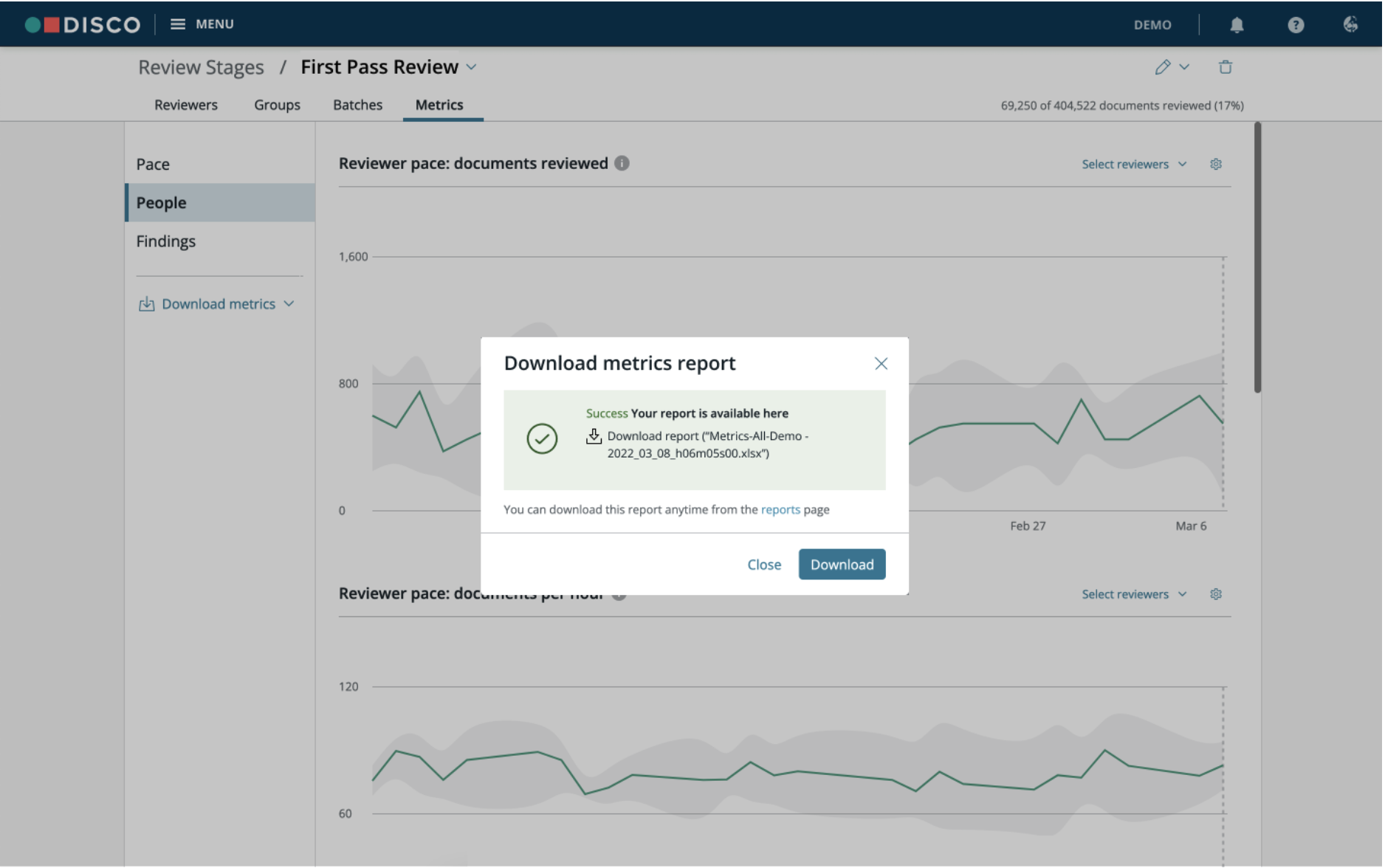

The underlying data for all the charts and tables in the Metrics section can be downloaded by expanding the Download metrics dropdown on the left-hand side, under the Findings tab. You can download All metrics data or only data for the Pace, People, or Findings tabs.

Selecting an option kicks off the download process and generates a .xlsx file.

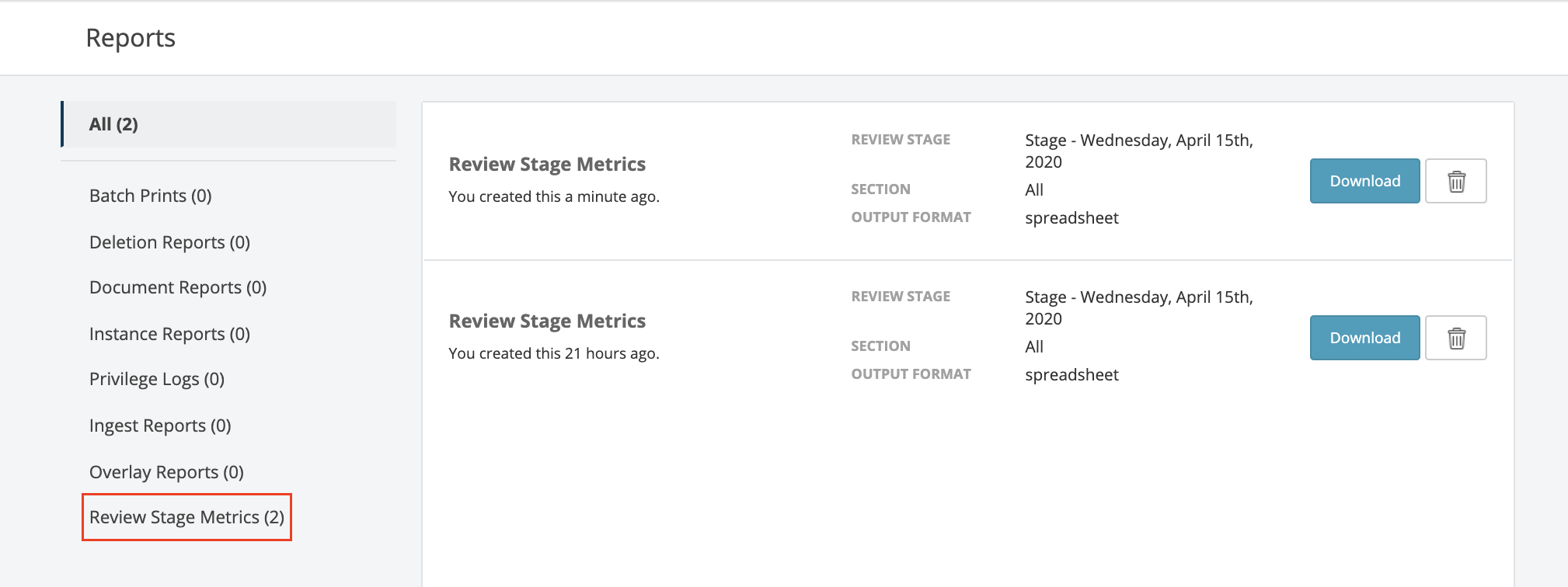

Once generated, the .xlsx file can be downloaded to your computer. It is also available to download later in the Reports section under Review Stage Metrics.

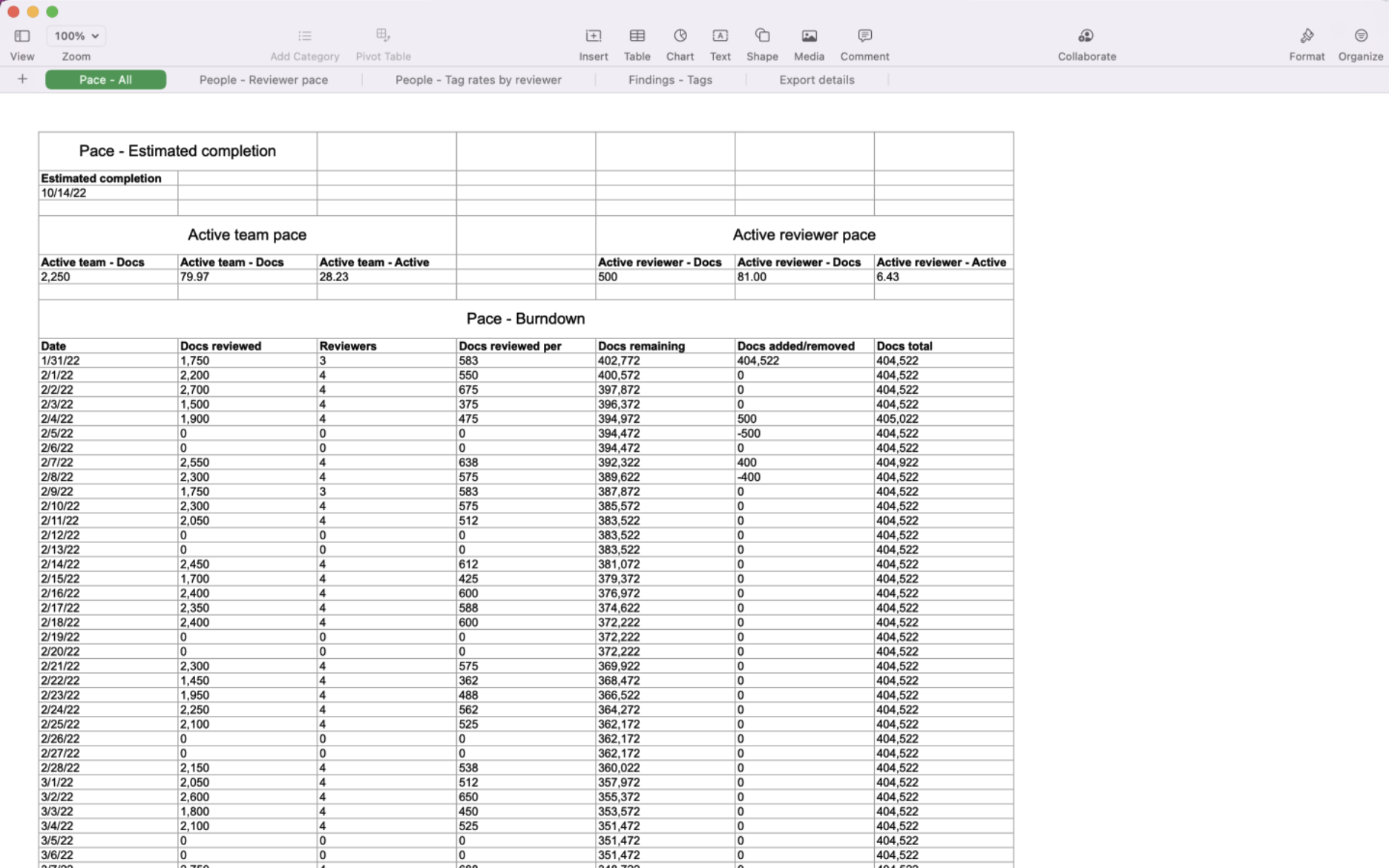

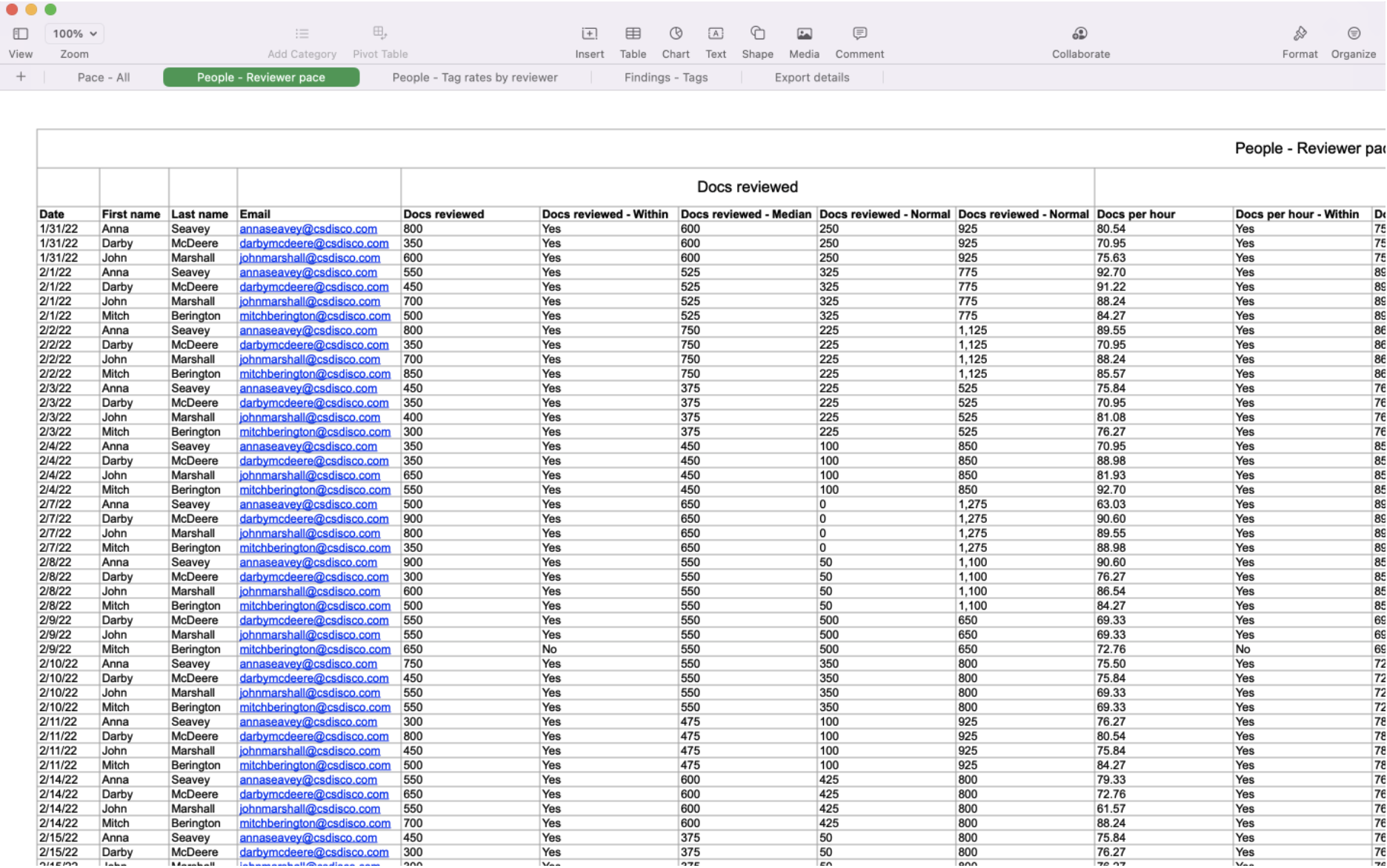

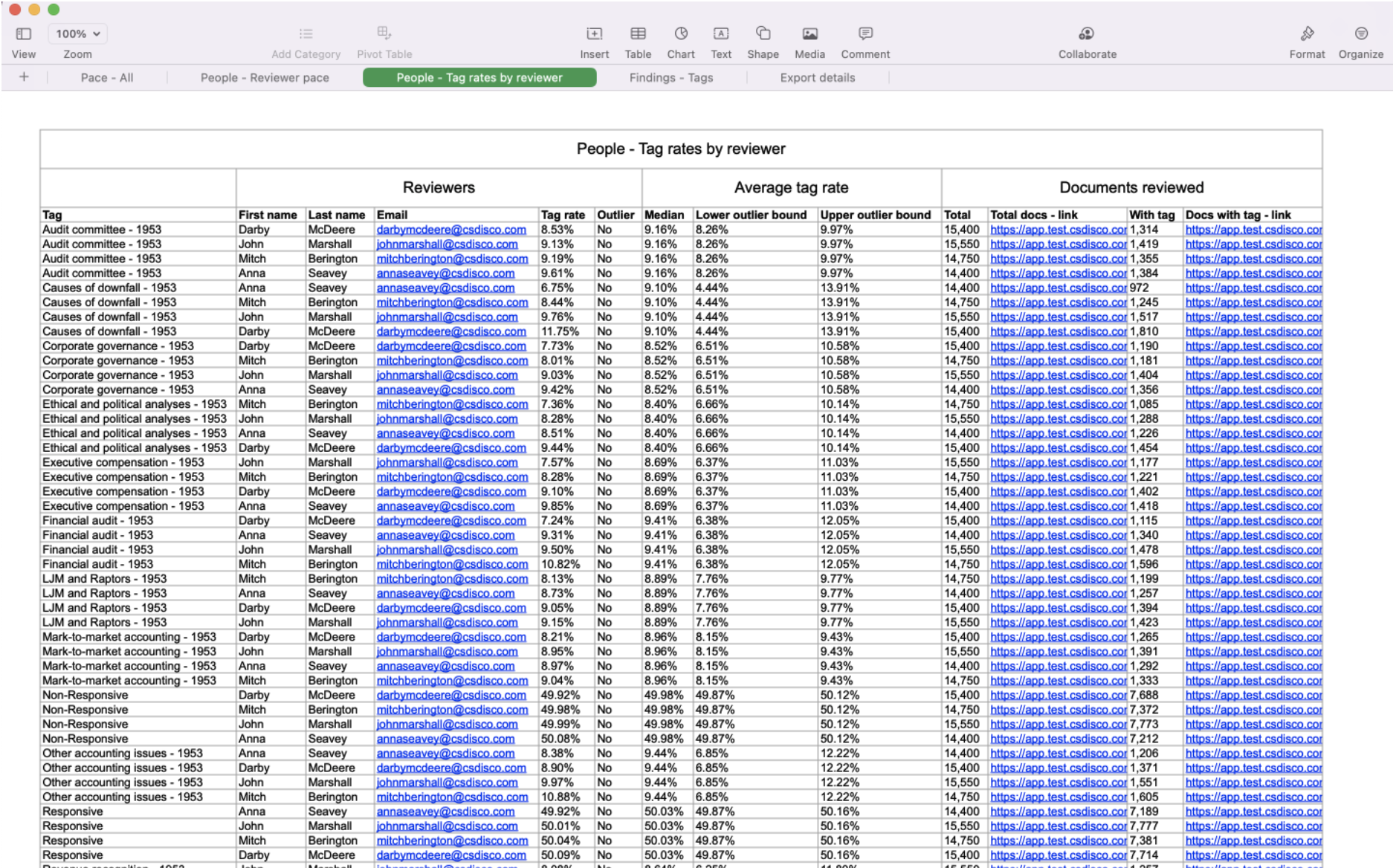

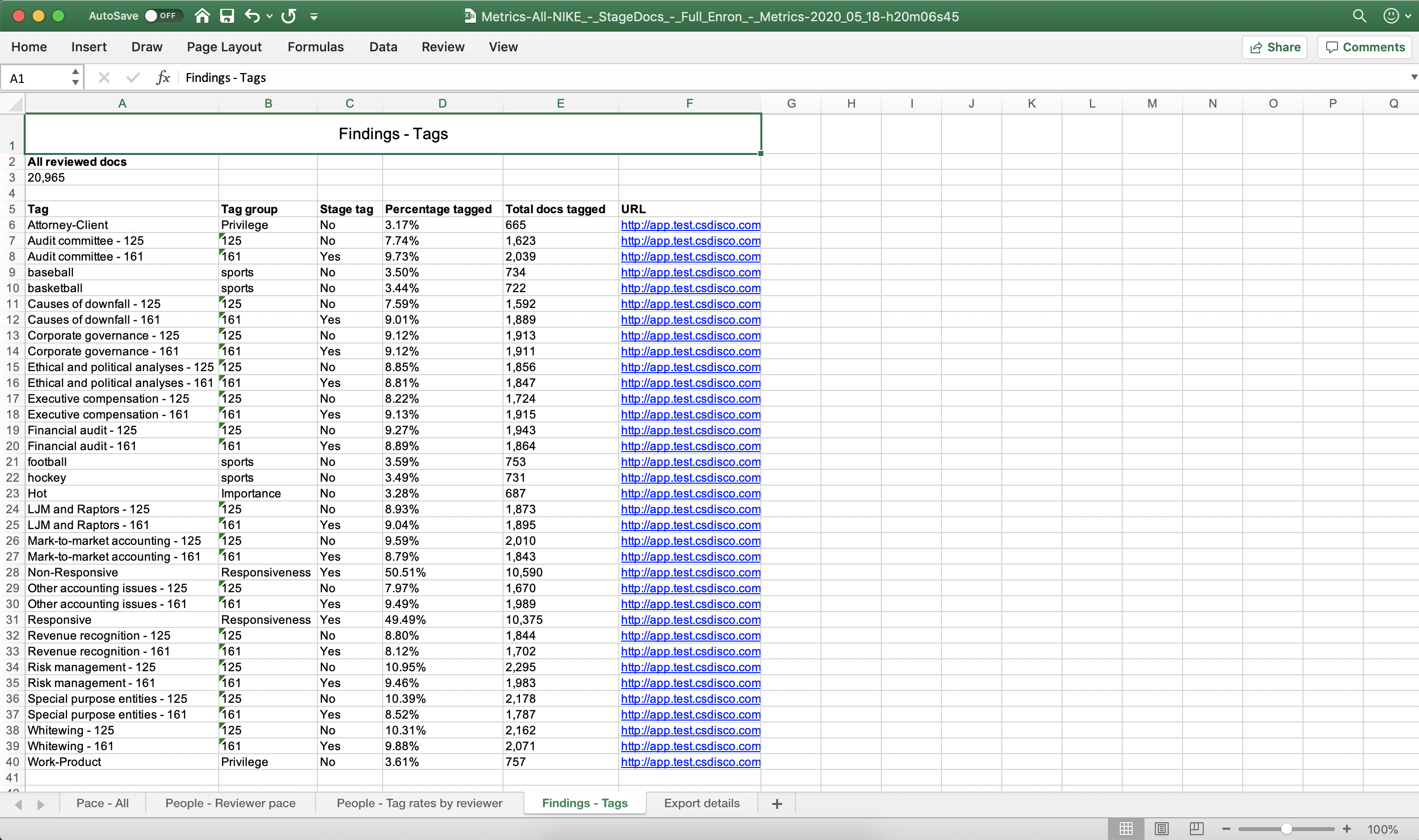

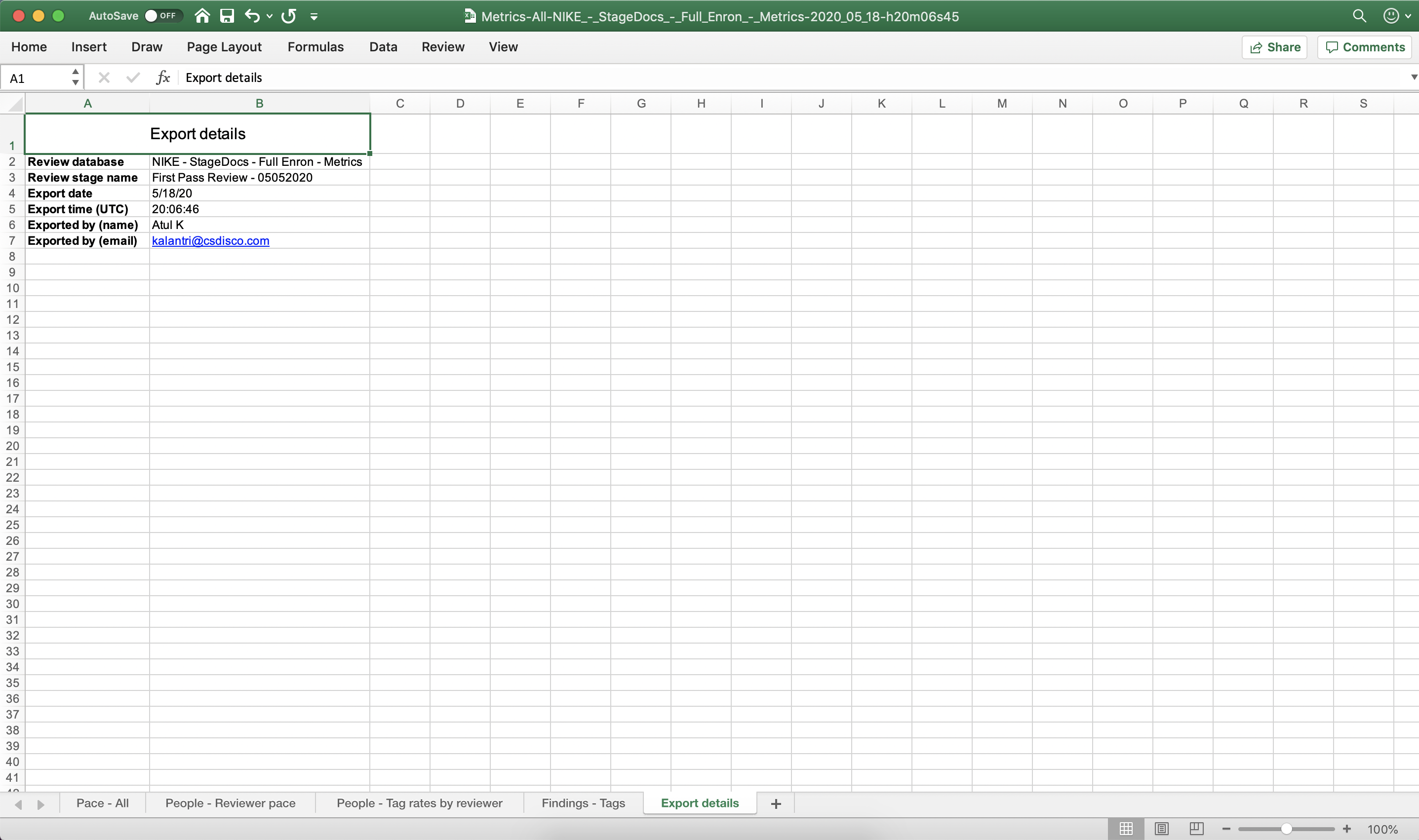

The screenshots below show a sample .xlsx file.

Pace - All sheet

People - Reviewer pace sheet

People - Tag rates by reviewer sheet

Findings - Tags sheet

Export details sheet

Time zones

Review metrics are reported in the time zone of the review database. For example, a database created in Central Time will have reviewer activity reported in Central Time, regardless of where reviewers are located. If a reviewer in London conducts review activity on April 2nd at 8 p.m. London time, the metrics report that activity on April 2nd at 2 p.m. Central Time.
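The London example can be checked with a short conversion. The sketch below uses fixed UTC offsets to stay self-contained: in early April, London observes British Summer Time (UTC+1) and U.S. Central observes daylight time (UTC-5). The year 2024 and the helper name are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets valid for early April (both regions on daylight/summer time).
BST = timezone(timedelta(hours=1), "BST")
CDT = timezone(timedelta(hours=-5), "CDT")


def to_database_time(local_event, database_tz):
    """Convert a reviewer's local timestamp to the database's time zone."""
    return local_event.astimezone(database_tz)


london_event = datetime(2024, 4, 2, 20, 0, tzinfo=BST)  # 8 p.m. in London
reported = to_database_time(london_event, CDT)
print(reported.isoformat())
```

The converted timestamp falls at 2 p.m. on April 2nd, matching the example. Activity late in a reviewer's local day can also land on a different calendar date in the database's time zone, which is worth keeping in mind when reading daily charts.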

For more information, see Review stages and Using review stages.